- Generative AI use cases

- AI tooling for CFOs

- Extracting value from AI

In the 1980s, the economist Robert Solow made an observation that defined a fundamental debate around the implementation of technology:

"You can see the computer age everywhere but in the productivity statistics."

During this period of rapid information technology development, companies were spending billions on PCs, networking equipment, and enterprise software, yet US national productivity growth remained stubbornly flat.

The phenomenon was defined by Erik Brynjolfsson when he coined the term 'Productivity Paradox'. It describes the slowdown in productivity growth despite IT adoption, caused by time lags, measurement errors, or poor implementation. The concept is not unfamiliar. Historical parallels can be drawn with other revolutions, such as the lag of nearly eighty years between the invention of electricity and the creation of the lightbulb. In the case of IT, it took more than a decade, and a complete rethinking of how organisations structured work around technology, before the gains really materialised at scale in the 1990s.

The same paradox is now resurfacing with Generative AI, in what some researchers are calling 'The GenAI Divide'. Generative AI adoption is accelerating rapidly, yet measurable productivity gains remain elusive for most organisations.

Only 5% of companies are realising significant value from AI; the other 95% are not.

History raises the question: do companies need to wait for the widespread productivity gains to arrive, or is this era of technology fundamentally different?

We would suggest the gap is an implementation issue: organisations are not yet applying AI in ways that truly integrate across functions and that can, in turn, shift the unit economics of the business.

What is inhibiting value creation?

There are many inhibitors, but at the centre of this implementation problem is the learning gap.

The learning gap operates on a couple of levels. The first is more technical: AI tools do not learn effectively from usage, either because the tool lacks the capability to properly integrate and capture enough context (i.e. a complete set of an organisation’s data) or because organisations are only training tools on limited pilot data. Particularly in the experimentation phase, tools can lack the feedback loops and integration depth needed for broad distribution later.

The second could be classed as psychological. The learning gap is also the distance between what companies experiment with and what they are able to deploy at scale, driven by the culture of adoption. This is shaped through governance and leadership, more than the capabilities of the technology itself.

As a result, the gap encompasses the entire chain: whether the tool integrates with existing systems and learns from usage, whether the organisation has created the conditions for people to use it effectively, and whether leadership has committed to scaling tools for proper use.

Where the money is going versus where the value lies

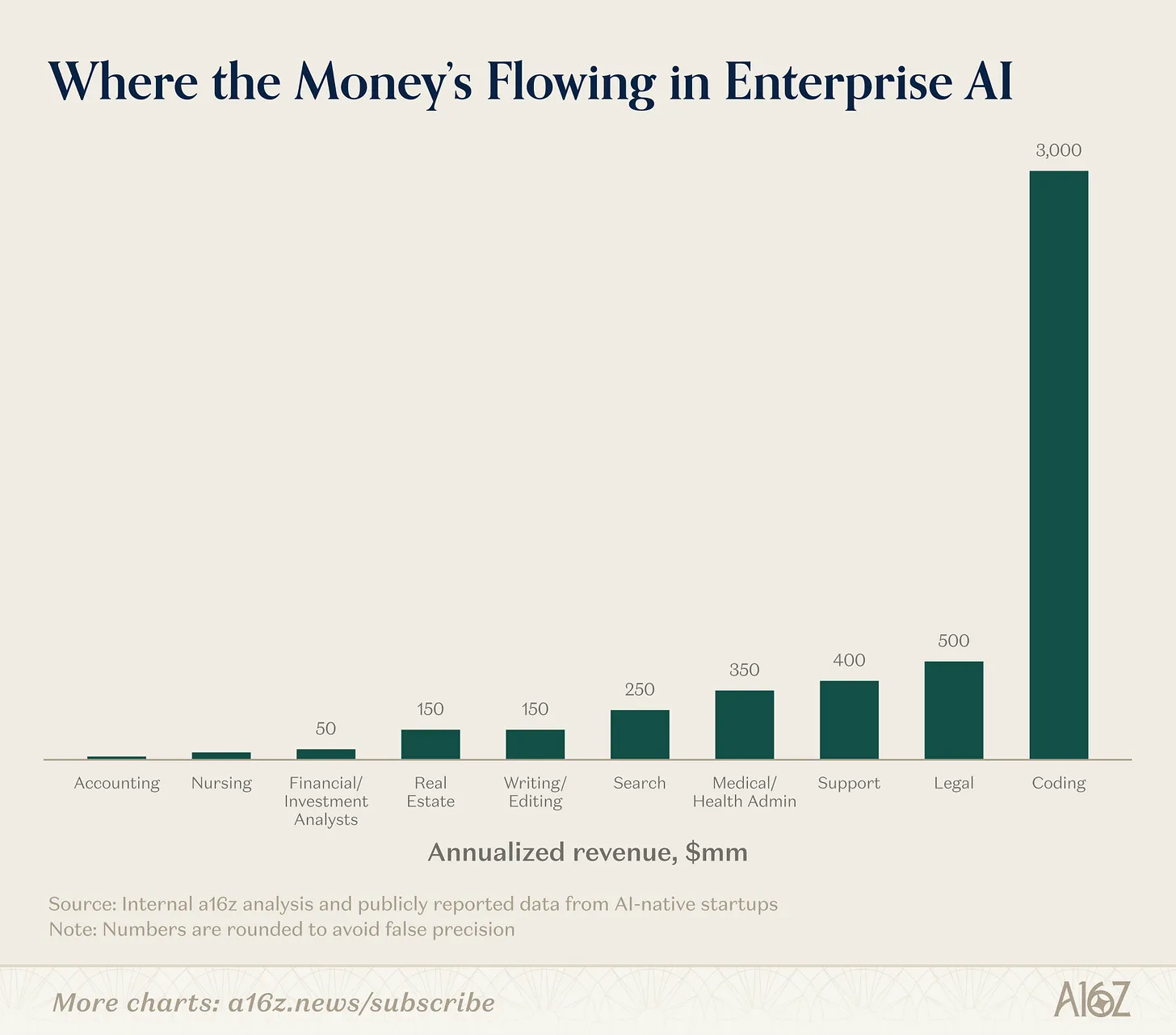

Unsurprisingly, the overwhelming majority of AI investment is flowing into coding assistance. Developer communities adopted generative AI quickly because their tooling ecosystem matured the fastest (e.g. tools such as Cursor and Claude Code). The engineering use case has low learning gap friction because feedback loops are short, memory capabilities are strong, and this cohort of employees is incentivised by the immediate productivity gains.

The flow into coding assistance also makes sense given that the majority of new start-ups entering the market are in the tech industry, where software engineers make up a higher proportion of the workforce.

Source: a16z, AI Adoption By The Numbers

The value generated in this domain is obvious. Today’s largest companies wouldn’t exist without AI coding assistance (e.g. Snowflake, AppLovin, CrowdStrike), and some of the fastest growing companies globally are AI coding assistance products (e.g. the Stockholm-based AI "vibe-coding" startup Lovable was valued at $6.6 billion following a $330 million Series B funding round in December 2025).

If AI adoption in coding is already creating value, then where is value lost?

For many companies, software engineering is not, or is no longer, the function where the greatest operational drag exists. Instead, one of the most overlooked use cases is the back office: finance, accounting, and parts of operations. Although each has faced its own technological disruption, these functions still run on fragmented workflows, manual data entry, and reconciliation cycles that consume skilled people's valuable time every month.

The irony is that the back office is precisely where AI should work well, given its structured, repetitive and high-volume nature.

The finance function is both an art and a science, but too much of its time is dedicated to science

But there is a critical difference from a function like engineering, where code is either built as a new output or built to integrate into existing platforms: for gains from AI to materialise in the back office, proper access to and integration with the data is required. For most finance teams, that data sits across six different platforms that do not necessarily communicate with each other. Bank portals, ERPs, accounting systems, spreadsheets and email threads each hold a piece of the picture.

The technical part of the learning gap (proper integration or capture of total context) therefore inhibits proper implementation and, in turn, value. Companies are buying standalone products that add to an already fragmented stack, creating more work to get running rather than less. The result is the productivity paradox: tooling bought to enhance workflows ends up underused, misunderstood, or ignored entirely because teams don't have time to learn a new product.

You’re a CFO looking to extract value. Here are the steps we’d suggest…

One of the lessons from the original productivity paradox was that technology drives growth when organisations redesign their workflows around it. The same applies in the era of GenAI.

Write out the three biggest pain points of your finance team

Before evaluating any tool, get specific about where time and accuracy are actually being lost. Is it reconciliation across multiple bank portals? FX rate consolidation from different providers? Cash reporting that takes days because the data lives in six places? Or constantly renewing term deposits? Starting with a clear, written list of these pain points forces specificity when it comes to assessing tools.
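As one way to make that written list concrete, the short Python sketch below ranks candidate pain points by estimated hours lost per month. It is only an illustration: the `PainPoint` class, the `top_pain_points` helper, and every figure in it are hypothetical placeholders, not data from any real finance team, and should be replaced with your own estimates.

```python
from dataclasses import dataclass


@dataclass
class PainPoint:
    name: str
    hours_per_run: float  # hypothetical estimate of staff hours per occurrence
    runs_per_month: int   # hypothetical estimate of occurrences per month

    @property
    def hours_per_month(self) -> float:
        return self.hours_per_run * self.runs_per_month


def top_pain_points(points, limit=3):
    """Return the `limit` pain points that consume the most hours per month."""
    return sorted(points, key=lambda p: p.hours_per_month, reverse=True)[:limit]


# Placeholder figures for illustration only; substitute your team's estimates.
candidates = [
    PainPoint("Bank portal reconciliation", hours_per_run=2.0, runs_per_month=20),
    PainPoint("FX rate consolidation", hours_per_run=1.5, runs_per_month=8),
    PainPoint("Cash reporting", hours_per_run=6.0, runs_per_month=4),
    PainPoint("Term deposit renewals", hours_per_run=0.5, runs_per_month=6),
]

for point in top_pain_points(candidates):
    print(f"{point.name}: {point.hours_per_month:.1f} hours/month")
```

Hours are used here as the ranking measure purely for simplicity; a team that loses more to errors than to time could rank by error rate or rework cost instead.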

Look for a product that serves all pain points and integrates across your key tech stack

The goal is to reduce fragmentation of tooling. With the pain points in mind, search for a single platform that will be most effective across all of them, and ensure that it can integrate with the systems you already rely on. When AI has a connected view of all your financial data, the technical side of the learning gap narrows significantly.

Make the chosen tool an executive mandate

Once a tool moves out of pilot, it needs proper executive sponsorship to scale. This means ensuring employees are fully trained, have complete access to the product, and understand that AI upskilling is an ongoing expectation rather than a one-off onboarding exercise. Put a policy in place that employees can easily access, and mandate that outputs come from whichever tooling is being deployed.

About Primary

Primary provides modern treasury management solutions for complete cash visibility, idle cash optimisation, and FX risk management - all in one platform.